How the brain's global organization, not any single region, gives rise to the mind's remarkable unity

Why do people who excel in one cognitive domain tend to excel in all of them? For over a century, this pattern puzzled scientists. Generations of neuroimaging studies lit up scan after scan, highlighting the frontal lobes, the parietal cortex, specific networks thought to be the home of our cognitive power — yet none could explain why intelligence behaves like a unified property of the whole mind, rather than a collection of isolated skills.

A new study from the University of Notre Dame offers a compelling answer — and it lies not in any particular brain region, but in the way the entire brain is organized. Published in Nature Communications in January 2026, the research by Professor Aron Barbey and lead author Ramsey Wilcox tested four concrete predictions of the Network Neuroscience Theory, analyzing brain imaging data from 831 adults in the Human Connectome Project alongside an independent sample of 145 adults. What they found reframes intelligence as a property of the system itself — a consequence of how efficiently, flexibly, and coherently the brain's networks coordinate with one another.

We spoke with Ramsey Wilcox, graduate student and lead author of the study, about what it means to shift the question from "where is intelligence?" to "how is the brain organized?" — and what that shift reveals about the nature of mind.

Image courtesy of the Decision Neuroscience Laboratory, University of Notre Dame.

The Conversation

Localization techniques have provided a wealth of information regarding regions that play a central role in mediating specific functions such as motor, vision, attention, and cognitive control processes, among others. However, complex behavior like adaptive problem-solving, a core facet of intelligence, is not a "cognitively pure" operation. It depends on a highly orchestrated "dance" among many different elements.

For example, solving a complex math puzzle requires visual processing to read the numbers, working memory to hold them in mind, retrieval of mathematical rules from long-term memory, and executive functions to sequence steps to solve the problem. No single region can accomplish all of these operations on its own. Instead, it is important to examine how many different regions, responsible for mediating many different basic functions, cooperate to accomplish complex and nuanced tasks.

How these regions cooperate depends heavily on the global architecture of the brain, which in turn affects all cognitive processes and gives rise to the positive manifold: the tendency for performance on different cognitive tasks to be positively correlated. Localization-based approaches were missing this critical integrative piece. They were missing the forest for the trees, for lack of a better phrase.

With traditional neuroimaging analyses, the focus was primarily on localization, measuring isolated levels of neural activation. If a participant performed a task in the scanner, researchers looked for which regions showed the highest task-evoked activation. This was thought to indicate the importance of a specific region for supporting the cognitive operations the task was designed to require.

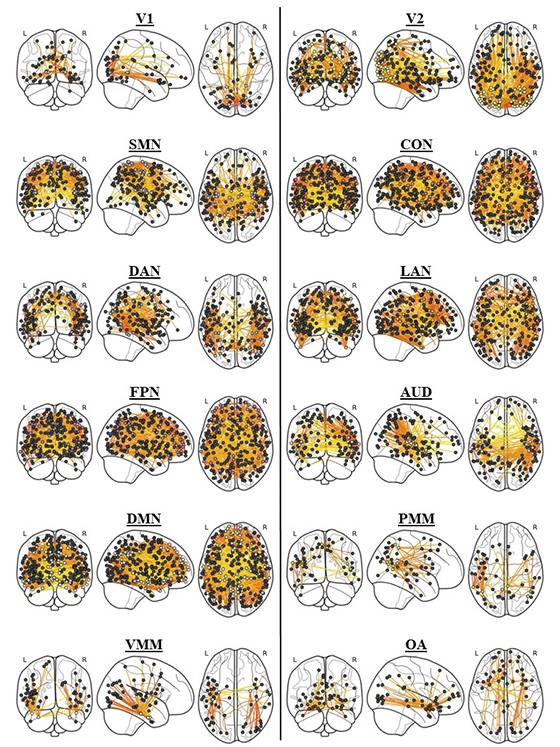

Today, the field increasingly recognizes that no single brain region operates in isolation. Biologically, the brain is a complex, heavily interconnected system. Distinct cortical areas are linked by structural white matter "highways" that traverse long physical distances, facilitating rapid communication. Because of this massive integration, it is highly unlikely that higher-order cognitive functions are housed in isolated pockets. Rather, they emerge from the coordinated activity of these widespread networks, which are in turn shaped by the brain's global architecture. The dependence of different forms of complex cognition on this global architecture accounts for the positive manifold.

Shifting the question to "how the system is organized" has led us to adopt network-based and topological measurement tools, often utilizing graph theory. Instead of just looking at the magnitude of local activation, these tools measure network properties like network efficiency and small-worldness. This shift in measurement fundamentally changes our interpretation. It allows us to explain not just where activity happens, but how the brain's structural and functional architecture integrates information, and how its intrinsic organization primes it to support complex, dynamic cognitive states.

For efficiency, we define this term with respect to the organization of connections within a network. What we are really talking about is the "ease of information flow" throughout the network: how easily a signal can travel from one region to another along the paths formed by its connections. In a theoretical network where all regions are connected to all other regions, efficiency is maximal because a signal only needs to traverse one connection. Real brains cannot afford the metabolic cost of being fully connected, so they rely on "small-world" network properties to keep efficiency high and organize information flow through the system. We quantify efficiency by calculating the characteristic path length, the number of connections in the shortest path between two nodes, averaged over all pairs of nodes. A shorter characteristic path length indicates higher efficiency.
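As an editorial illustration (not code from the study), the characteristic path length described here can be computed on a toy network with a breadth-first search. The sketch assumes an unweighted, connected graph stored as a dictionary of neighbor sets. The helper names `ring`, `complete`, and `add_edge` are hypothetical, and real connectome analyses typically use weighted graphs and dedicated libraries.

```python
from collections import deque


def bfs_distances(adj, source):
    """Return the hop count from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in adj[node]:
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    return dist


def characteristic_path_length(adj):
    """Mean shortest-path length over all ordered pairs of distinct nodes.

    Assumes the graph is unweighted and connected.
    """
    total = 0
    pairs = 0
    for source in adj:
        for target, hops in bfs_distances(adj, source).items():
            if target != source:
                total += hops
                pairs += 1
    return total / pairs


def ring(n):
    """Ring lattice: each node is linked to its two nearest neighbors."""
    return {i: {(i - 1) % n, (i + 1) % n} for i in range(n)}


def complete(n):
    """Fully connected graph: every node is linked to every other node."""
    return {i: set(range(n)) - {i} for i in range(n)}


def add_edge(adj, a, b):
    """Add an undirected edge between a and b."""
    adj[a].add(b)
    adj[b].add(a)


lattice = ring(6)
shortcut = ring(6)
add_edge(shortcut, 0, 3)  # one long-range "shortcut" across the ring
full = complete(6)

print(characteristic_path_length(lattice))   # 1.8
print(characteristic_path_length(shortcut))  # about 1.67
print(characteristic_path_length(full))      # 1.0
```

A single long-range connection already shortens the average path of the ring, while the fully connected graph reaches the minimum of 1.0 at the price of many more connections. That trade-off is the intuition behind the small-world argument.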

Flexibility is a bit more complicated. Broadly speaking, I think of flexibility with respect to how the brain's structural architecture constrains and facilitates transitions between different functional states. There is an exciting direction in Network Neuroscience that appeals to Network Control Theory to help explain this relationship. Part of the theory's formalization quantifies the energetic cost required to transition between different patterns of neuronal activation as a function of the constraints imposed by the structural topology. Measuring how much "energy" is required to make a transition serves as a measure of flexibility. Brains requiring less energy to move between diverse cognitive states can be considered more flexible.
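For readers who want the idea in symbols, one standard textbook formulation of this energetic cost is sketched below. It is an editorial illustration that assumes linear, discrete-time dynamics; the symbols ($A$ for the structural connectivity matrix, $B$ for the set of control regions, $u_t$ for the control input, $K$ for the time horizon) are assumptions for this sketch, not notation taken from the study.

```latex
% Assumed linear dynamics and the energy of a control sequence u_0, ..., u_{K-1}
x_{t+1} = A\,x_t + B\,u_t,
\qquad
E(u) = \sum_{t=0}^{K-1} u_t^{\top} u_t

% Minimum energy needed to move from state x_0 to state x_K in K steps,
% assuming the controllability Gramian W_K is invertible
E^{*} = \left(x_K - A^{K} x_0\right)^{\top} W_K^{-1} \left(x_K - A^{K} x_0\right),
\qquad
W_K = \sum_{t=0}^{K-1} A^{t} B B^{\top} \left(A^{\top}\right)^{t}
```

Under these assumptions, the structural matrix $A$ enters the Gramian $W_K$ directly, which is how the wiring of the network constrains how cheaply one activation pattern can be exchanged for another.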

If I had to pick, I would say distributed processing was the most difficult due to its "big data" nature. The number of measurable brain connections vastly outnumbers the participants we can realistically recruit for our studies. In statistics, this high-dimensional dilemma is often called the p > n problem, where the number of features (p) exceeds the number of participants (n). This makes fitting models difficult because you do not have enough observations for a traditional regression model to identify a unique solution. We therefore have to appeal to machine learning techniques to overcome this issue.

The advantage of machine learning is that it utilizes regularization techniques, which effectively shrink or remove connectivity features, allowing the model to finally converge on a solution. However, this requires careful tuning of hyperparameters, which strongly affect model performance. Machine learning models are incredibly powerful and will find any pattern in the data that relates to your outcome of interest. The tricky part is figuring out whether this pattern is idiosyncratic to your sample, a trap known as overfitting, or whether it truly generalizes to unseen data. We therefore use rigorous cross-validation techniques to check that our model can generalize to other samples.
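The interplay of regularization and cross-validation can be sketched in a few lines. This is an editorial illustration, not the study's pipeline: it swaps in plain ridge regression, uses synthetic data (12 hypothetical "participants", 40 hypothetical "connectivity features"), omits an intercept, and all function names are made up for the example. The dual form of ridge regression only requires solving an n-by-n system, which is why it stays well defined when p > n.

```python
import random


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [A[i][:] + [b[i]] for i in range(n)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - tail) / M[i][i]
    return x


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def ridge_fit(X, y, lam):
    """Ridge weights via the dual form w = X^T (X X^T + lam I)^-1 y.

    Requires lam > 0; no intercept term is fitted.
    """
    n = len(X)
    gram = [[dot(X[i], X[j]) + (lam if i == j else 0.0) for j in range(n)]
            for i in range(n)]
    alpha = solve(gram, y)
    return [sum(alpha[i] * X[i][j] for i in range(n))
            for j in range(len(X[0]))]


def kfold(n, k):
    """Split indices 0..n-1 into k disjoint test folds."""
    return [list(range(start, n, k)) for start in range(k)]


def cv_mse(X, y, lam, k=4):
    """Mean squared prediction error on held-out folds for one penalty."""
    squared_error = 0.0
    for test_idx in kfold(len(X), k):
        held_out = set(test_idx)
        train_idx = [i for i in range(len(X)) if i not in held_out]
        w = ridge_fit([X[i] for i in train_idx],
                      [y[i] for i in train_idx], lam)
        squared_error += sum((dot(w, X[i]) - y[i]) ** 2 for i in test_idx)
    return squared_error / len(X)


# Synthetic data: more features (p = 40) than observations (n = 12).
rng = random.Random(0)
n, p = 12, 40
X = [[rng.gauss(0, 1) for _ in range(p)] for _ in range(n)]
y = [2.0 * row[0] - row[1] + rng.gauss(0, 0.1) for row in X]

penalties = [0.1, 1.0, 10.0, 100.0]  # candidate hyperparameter values
scores = {lam: cv_mse(X, y, lam) for lam in penalties}
best = min(scores, key=scores.get)
print("cross-validated MSE per penalty:", scores)
print("selected penalty:", best)
```

The penalty is chosen by its error on data the model never saw during fitting, rather than by its fit to the training sample. With a very small penalty the model reproduces the training data almost perfectly, which is exactly the overfitting risk described above.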

As for what surprised me most — given our study was theoretically motivated, relying on concrete predictions rather than exploratory ones, I do not think any finding was surprising in the conventional sense. We were fairly convinced by the state of the literature that these predictions would prove correct. But it is always deeply exciting when your analyses work out the way you envisioned them.

It is likely a combination of all three. First, the physical, hardwired anatomical connections emanating from a hub inherently constrain and influence the other brain areas they directly connect to. At the same time, our brain is constantly evolving as we experience new things. The consolidation of prior learning is reflected in physical changes to the brain's structural architecture. The mechanism by which we incorporate new information involves updating the relative strength of these connections through neuroplasticity.

Imagine engaging in a difficult new computer game. As you first learn the mechanics, it requires intense cognitive effort. But as you gain experience, it becomes second nature — a "learned configuration" that has optimized the network based on the knowledge you have gained.

Finally, this is a highly dynamic, context-dependent process. Regulatory hubs are not "wedded" to engaging a specific set of regions for a specific context. They can flexibly change their communication strategies based on the demands at hand. Hubs in the fronto-parietal control network are a perfect example. Depending on the task, a communication hub will actively change which regions it speaks to. FPN hubs will increase communication with the Default Mode Network when you turn your attention inward to recall past memories, but will rapidly shift to increase communication with the Dorsal Attention Network when you need to focus on stimuli in your direct external environment.

Executive control is a cognitive function, whereas system-wide coordination refers to the intrinsic organization of the brain's connections that allows cognition to unfold in the first place. A small-world architecture introduces segregation into the system by establishing modules — sub-networks — that can autonomously carry out basic neural operations. At the same time, these modules are not fully isolated; they communicate with one another, integrating these basic operations to support higher-order forms of cognition.
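One common way to put a number on this kind of segregation is Newman's modularity score, shown here as an editorial illustration; the source does not state that this particular measure was used. The sketch assumes an undirected, unweighted graph given as an edge list, and the toy network (two triangles joined by a single bridge) is hypothetical.

```python
def modularity(edges, community):
    """Newman modularity Q of an undirected, unweighted graph.

    edges: list of (a, b) pairs, each undirected edge listed once.
    community: dict mapping every node to a community label.
    """
    m = len(edges)
    within = {}      # number of edges with both ends in the same community
    degree_sum = {}  # summed degree of the nodes in each community
    for a, b in edges:
        ca, cb = community[a], community[b]
        degree_sum[ca] = degree_sum.get(ca, 0) + 1
        degree_sum[cb] = degree_sum.get(cb, 0) + 1
        if ca == cb:
            within[ca] = within.get(ca, 0) + 1
    return sum(within.get(c, 0) / m - (degree_sum[c] / (2 * m)) ** 2
               for c in degree_sum)


# Two tightly knit triangles ("modules") joined by one bridging edge.
edges = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]

two_modules = {0: "a", 1: "a", 2: "a", 3: "b", 4: "b", 5: "b"}
one_module = {node: "a" for node in range(6)}

print(round(modularity(edges, two_modules), 3))  # 0.357
print(modularity(edges, one_module))             # 0.0
```

The partition that matches the two triangles scores well above zero, while lumping every node into one group scores zero. The single bridging edge is what keeps the modules from being fully isolated, which mirrors the balance of segregation and integration described in the text.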

Notice that we are not talking about cognition itself — the active processing of information — but rather the structural organization that allows diverse forms of information processing to occur. We are talking about setting the groundwork, the enabling constraints, that allow neural activity to execute cognitive processes in a context-dependent manner.

Think of your house and the network of pipes that supply water to various rooms. The structural integrity and layout of the plumbing establish the foundation through which water can dynamically flow, giving you access to it when and where you need it. However, the pipes themselves do not propel the water. Turning a faucet to actively control the volume and direction of the water toward a specific goal is akin to executive function. The pipes — coordination — dictate what flow is possible, while the faucet — executive control — dictates the flow that actually occurs.

The fundamental topological architecture certainly plays a massive role in the manifestation of individual differences in general intelligence. If we consider that general learning requires the rapid assimilation and integration of novel, multi-domain information, it makes sense that individuals with highly efficient, flexible system-level architectures are better equipped to adapt to new cognitive demands.

However, it is crucial to remember that these are correlational analyses, which do not warrant strict causal inferences. Furthermore, there are most likely other factors at play across different biological scales. At the molecular level, features like microstructural integrity — the myelination, or "insulation," of white matter tracts — and metabolic efficiency are critical variables that prior studies consistently implicate in cognitive performance. That being said, these levels of analysis are not mutually exclusive. It is highly probable that lower-level molecular features contribute to cognition primarily because they assist with the development and deployment of the system-level properties we observe.

Conclusion

What Wilcox and his colleagues have accomplished is more than a technical advance in brain imaging methodology. They have offered a new way of asking an old question — and in doing so, reframed what intelligence actually is.

Intelligence, in this account, is not a thing stored anywhere. It is not a region, not a network, not a module. It is a property that emerges when the brain's many specialized systems are organized to communicate with exceptional efficiency, transition between states with minimal effort, and coordinate across the full breadth of the cortex without losing local precision. It is, in essence, an architectural achievement.

The implications extend outward in several directions. For neuroscience, the findings help explain why intelligence changes so predictably across the lifespan — developing broadly in childhood, declining with aging, and proving uniquely sensitive to diffuse brain injury — in each case because large-scale coordination, not isolated function, is what shifts. For artificial intelligence, the study raises a pointed question: if human general intelligence arises from system-level organization rather than a dedicated general-purpose processor, then scaling specialized capabilities may not be enough to achieve it in machines.

And for the rest of us, there is something quietly profound in the idea that what makes a mind capable is not the power of any single part, but the elegance with which all the parts work together.